The Problem: When Search Systems Don’t Speak Human

Place search has always struggled with a fundamental challenge: the gap between human intent and machine understanding. People describe what they want in natural, human terms, but most search systems speak in terms of categories, filters, and structured parameters like price ranges and rating thresholds. Ask someone where to find “a cozy spot for a first date,” and they know exactly what you mean. Ask a traditional search API the same question, and it fails to understand the intent. These queries communicate atmospheric qualities and experiential expectations that cannot be easily translated into category and parameter constraints.

The Solution: Natural Language Search That Actually Understands

Today we’re excited to announce the launch of the Foursquare Ask API, a natural language place search endpoint that fundamentally reimagines how developers build place discovery experiences. Instead of forcing users to translate intent into filters, the API speaks human. The API interprets the intent, identifies relevant concepts, and returns places that match what the user actually means.

The API delivers three transformative capabilities for developers:

- Context-Aware Personalization Without Profiles: The optional context parameter enables query-specific personalization without requiring stored user profiles—a critical design decision for privacy and flexibility. Queries like “dinner near me” with context “allergic to gluten” deliver tailored results without user accounts or preference histories. The system treats contextual preferences as ranking signals, not absolute constraints—preserving serendipitous discovery while respecting stated needs.

- Transparent Justifications for Every Recommendation: Every recommendation comes with clear explanations of why a venue matched, citing specific user tips and relevant content rather than opaque similarity metrics. This transparency enables informed decision-making and establishes trust through complete auditability of the recommendation logic.

- Intelligent Adaptive Performance: The system automatically chooses an execution strategy based on query complexity, so end users never have to pick between fast and thinking modes. Simple categorical searches execute in under 200 ms, matching traditional search performance. Complex multi-constraint queries like “somewhere my vegetarian friend and meat-loving husband will both enjoy” automatically trigger agentic reasoning, a multi-step process that breaks down competing requirements, evaluates trade-offs, and synthesizes balanced recommendations, completing in under 2 seconds.
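The difference between a ranking signal and a hard constraint, as described in the first capability, can be made concrete with a small sketch. The `Venue` fields, the `context_boost` weight, and the scoring function are hypothetical and chosen for illustration only; they are not the Ask API's actual ranking logic.

```python
from dataclasses import dataclass, field


@dataclass
class Venue:
    name: str
    base_score: float  # relevance to the query itself (invented values)
    tags: set[str] = field(default_factory=set)


def rank_with_context(venues, context_tags, context_boost=0.3):
    """Rank venues, treating context as a soft boost rather than a filter.

    Venues that match the stated context move up, but venues that do not
    match stay in the list instead of being dropped.
    """
    def score(venue):
        matched = len(venue.tags & context_tags)
        return venue.base_score + context_boost * matched

    return sorted(venues, key=score, reverse=True)


venues = [
    Venue("Trattoria A", 0.80, {"pasta"}),
    Venue("Bistro B", 0.70, {"gluten-free options"}),
    Venue("Diner C", 0.60),
]
ranked = rank_with_context(venues, {"gluten-free options"})
print([v.name for v in ranked])  # Bistro B moves to the top; nothing is removed
```

A hard filter would have returned only Bistro B. The soft boost keeps all three venues, which is one way to preserve the serendipity the text describes.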

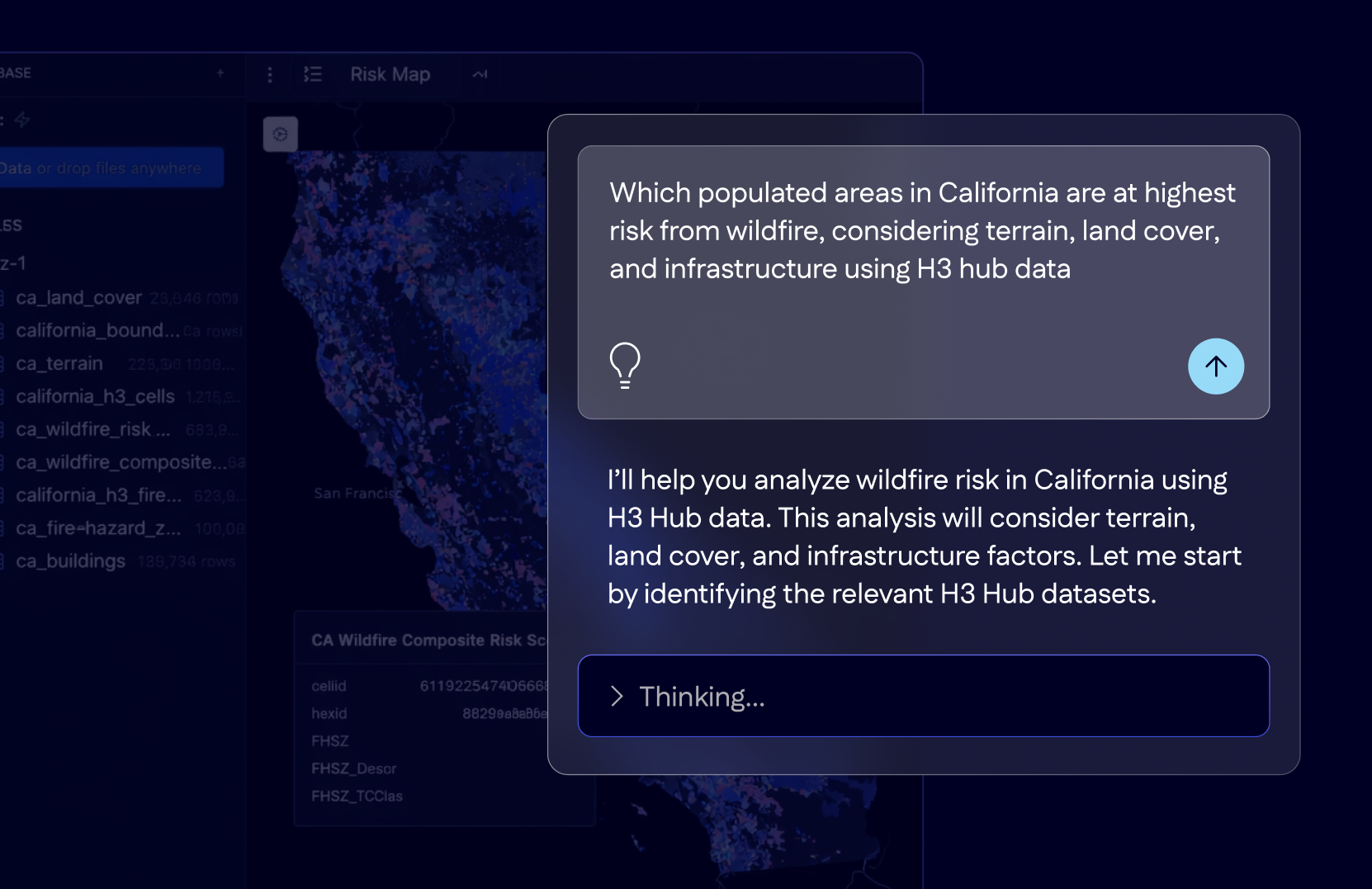

Real-World Example: Finding the Perfect Anniversary Dinner

Traditional search APIs struggle with queries like “romantic Italian restaurant in Brooklyn for an anniversary dinner.” They might match “Italian” and “Brooklyn,” but miss the intent entirely. The Ask API recognizes what the user actually means: not just any Italian restaurant, but one with a specific atmosphere (romantic), suited to a special context (anniversary), in a particular location.

It first identifies concepts relevant to the query, such as “date night,” “intimate setting,” and “candlelit atmosphere,” and finds the venues that genuinely match these characteristics. It then returns multiple recommendations ranked by relevance, each with a reason explaining why the venue was recommended. Here’s what a typical recommendation looks like:

Locanda Vini & Olii

- "Perfect for a romantic anniversary dinner. Known for its intimate candlelit atmosphere."

Why this venue?

- 15 users mention "romantic atmosphere" including: "The candlelit tables are incredibly romantic..."

- Ranked #2 for romantic places in Brooklyn

Notice what makes this different: you’re not just getting a list of Italian restaurants. You’re getting venues that match the intent behind your query, with transparent explanations showing exactly why each place was recommended. Every recommendation cites real user experiences, not opaque algorithms.
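A response shaped like the example above could be modeled roughly as follows. This is a sketch only: the class and field names (`Recommendation`, `Justification`, `mention_count`, and so on) are assumptions made for illustration and are not taken from the Ask API documentation.

```python
from dataclasses import dataclass


@dataclass
class Justification:
    phrase: str          # attribute users mentioned, e.g. "romantic atmosphere"
    mention_count: int   # how many users mentioned it
    sample_tip: str      # one supporting tip, quoted as written


@dataclass
class Recommendation:
    venue_name: str
    summary: str
    justifications: list[Justification]

    def explain(self) -> str:
        """Render the 'Why this venue?' section as plain text."""
        lines = [f"Why {self.venue_name}?"]
        for j in self.justifications:
            lines.append(
                f'- {j.mention_count} users mention "{j.phrase}" '
                f'including: "{j.sample_tip}"'
            )
        return "\n".join(lines)


rec = Recommendation(
    venue_name="Example Trattoria",  # placeholder venue, not real data
    summary="Perfect for a romantic anniversary dinner.",
    justifications=[
        Justification(
            phrase="romantic atmosphere",
            mention_count=15,
            sample_tip="The candlelit tables are incredibly romantic...",
        )
    ],
)
print(rec.explain())
```

Keeping each justification as structured data, rather than a pre-rendered string, would let a client decide how much of the evidence to show.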

Technical Deep Dive: Building Explainable Natural Language Search

The capabilities demonstrated above (natural language understanding, transparent justifications, sub-200 ms performance) required solving a fundamental architectural challenge. Traditional approaches force developers to choose between semantic intelligence and explainability. Vector search delivers nuanced understanding but operates as a black box. Structured search provides transparency but struggles with linguistic variation. We needed both.

The obvious solution seemed straightforward: leverage modern LLMs by embedding all user tips as vectors, then perform similarity search at query time. This is how most semantic search systems work today: elegant, simple, and powerful. But this approach introduced three critical problems that would undermine our core requirements for transparency and performance.

Why Simple Vector Search Falls Short

Pure vector search fails for natural language place discovery in three fundamental ways:

- Noise dilutes signal: Real user tips mix valuable information with conversational noise. Consider: “Went here with my friend Sarah last Tuesday, the outdoor seating was nice but the waiter was slow”. This tip contains one useful signal, “outdoor seating”, buried in irrelevant context.

- No aggregation: Venue characteristics emerge from consensus. Fifty independent users mentioning “outdoor seating” provides far stronger evidence than one eloquent description. But pure vector search treats each tip as an independent document. There’s no mechanism to recognize that 50 separate tips all reference the same attribute.

- Opaque black box: Vector similarity returns a score (e.g., 0.87) without explanation. Users can’t understand why a venue matched. Developers can’t debug unexpected results. The reasoning chain is completely opaque: you get a number, not an explanation citing specific tips and confidence levels.
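The aggregation gap in particular is easy to illustrate. In the sketch below, which uses made-up tips and a hypothetical fixed phrase list, counting how many distinct tips mention an attribute yields a consensus signal that per-tip similarity scores alone do not expose.

```python
from collections import Counter

# Made-up tips for a single venue, for illustration only.
tips = [
    "Went here with my friend Sarah last Tuesday, the outdoor seating was nice",
    "Great outdoor seating in summer",
    "Love the outdoor seating, slow waiter though",
    "Cash only",
]

# Hypothetical attribute phrases; a real system would extract these from data.
attributes = ["outdoor seating", "cash only", "live music"]


def aggregate_mentions(tips, attributes):
    """Count the number of tips that mention each attribute at least once."""
    counts = Counter()
    for tip in tips:
        text = tip.lower()
        for attr in attributes:
            if attr in text:
                counts[attr] += 1
    return counts


counts = aggregate_mentions(tips, attributes)
print(counts.most_common())
```

Because the count is tied to specific tips, the same structure can also supply the citations used in a justification.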

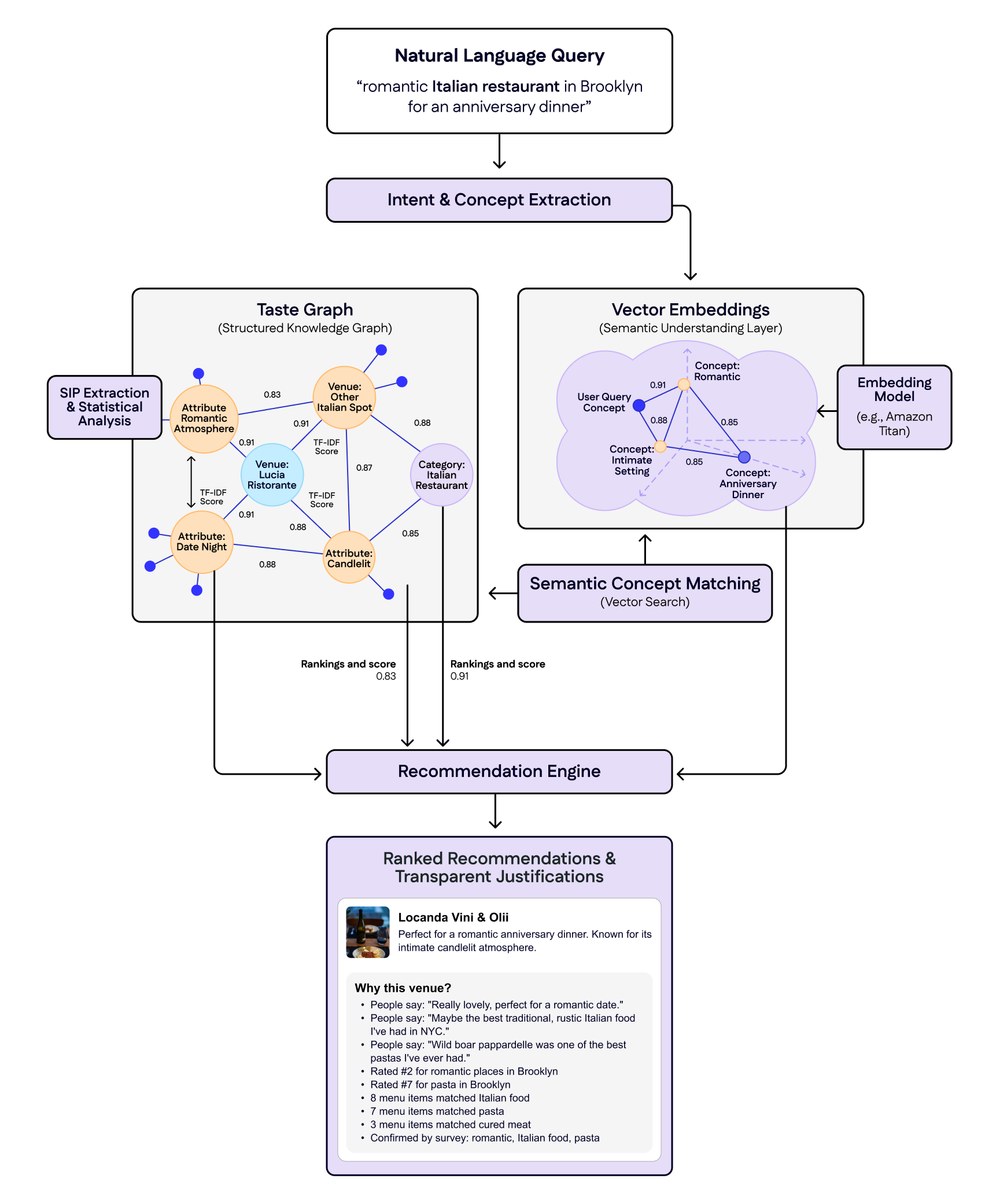

Our Hybrid Approach: Knowledge Graph + Vector Embeddings

Pure vector search couldn’t deliver the transparency and performance we needed. Our solution combines two complementary layers:

The Taste Graph extracts structured concepts from unstructured user content through statistical analysis. Statistically Interesting Phrase (SIP) analysis identifies meaningful terms: “latte art,” which appears 100x more often in coffee shop tips than in everyday conversation, gets flagged as signal, while “went here last Tuesday” is filtered out as noise. TF-IDF (Term Frequency-Inverse Document Frequency) then quantifies attribute strength: 25 mentions of “latte art” (rare and distinctive) score higher than 100 mentions of “good coffee” (common everywhere).
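Both statistics can be sketched with textbook formulas. The frequencies and venue counts below are invented so that the numbers echo the examples in the text. The exact SIP and TF-IDF variants used by the Taste Graph are not specified in this post, so this block assumes a plain frequency ratio and the classic count times log(N/df) weighting.

```python
import math


def sip_ratio(domain_freq: float, background_freq: float) -> float:
    """How many times more often a phrase appears in domain text
    (e.g. coffee shop tips) than in general background text."""
    return domain_freq / background_freq


def tf_idf(term_count: int, total_venues: int, venues_with_term: int) -> float:
    """Classic TF-IDF: raw term count times log inverse document frequency."""
    idf = math.log(total_venues / venues_with_term)
    return term_count * idf


# Invented relative frequencies: "latte art" is about 100x more common in tips.
print(sip_ratio(domain_freq=0.002, background_freq=0.00002))

# Invented corpus: 10,000 venues; "latte art" appears at 50 of them,
# "good coffee" at 8,000 of them.
latte_art = tf_idf(term_count=25, total_venues=10_000, venues_with_term=50)
good_coffee = tf_idf(term_count=100, total_venues=10_000, venues_with_term=8_000)

# The rarer phrase wins despite having a quarter of the mentions.
print(latte_art > good_coffee)
```

The inverse document frequency term is what lets a small number of distinctive mentions outweigh a large number of generic ones.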

Vector Embeddings translate natural language to structured concepts. We generate 1024-dimensional embeddings for every Taste Graph concept, enabling semantic matching: “romantic” maps to “Date Night” (0.89 similarity), “Intimate Atmosphere” (0.86), and “Candlelit” (0.83). Users express intent in their own words; the system translates to precise, verifiable concepts.
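Mapping a query term to Taste Graph concepts amounts to a nearest-neighbor lookup by cosine similarity. The sketch below uses invented 3-dimensional vectors in place of the 1024-dimensional embeddings, and the concept names and values are illustrative only, so the resulting scores will not match the figures quoted above.

```python
import math


def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


# Toy embeddings (invented); real ones would come from an embedding model.
concepts = {
    "Date Night": [0.9, 0.4, 0.1],
    "Candlelit": [0.8, 0.5, 0.3],
    "Sports Bar": [0.1, 0.2, 0.9],
}
query_vec = [0.9, 0.45, 0.15]  # stand-in for the embedding of "romantic"


def top_concepts(query_vec, concepts, k=2):
    """Return the k concepts most similar to the query, best first."""
    scored = [(name, cosine(query_vec, vec)) for name, vec in concepts.items()]
    return sorted(scored, key=lambda item: item[1], reverse=True)[:k]


for name, sim in top_concepts(query_vec, concepts):
    print(f"{name}: {sim:.2f}")
```

At production scale this brute-force loop would typically be replaced by an approximate nearest-neighbor index, but the scoring idea is the same.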

Together, the systems achieve what neither could alone: the Taste Graph provides statistical rigor, aggregation, and provenance; vector embeddings deliver semantic flexibility and linguistic understanding, creating explainable intelligence that operates at production speed.

How the Two Systems Work Together

The architecture is easier to understand by following a single query through both layers. When a user searches for “romantic Italian restaurant in Brooklyn for an anniversary dinner” (our example from earlier), the system executes a four-step workflow that integrates both components:

- Extract concepts: The system identifies key concepts embedded in the user’s request: what makes a place romantic, what defines a dinner venue, what atmosphere they’re seeking.

- Map to attributes: Vector search finds semantically equivalent concepts in our Taste Graph: “romantic” maps to “Date Night” (0.89 similarity), “Intimate Atmosphere” (0.86), and “Candlelit” (0.83).

- Find matching venues: The system traverses the knowledge graph to find venues with strong affinities to these attributes, each carrying pre-computed TF-IDF confidence scores that quantify how distinctive these characteristics are for each venue.

- Generate justifications: Final results include contextual explanations citing specific user tips (“15 users mention ‘romantic atmosphere’ including: ‘The candlelit tables are incredibly romantic…’”) combined with ranking signals like “#2 for Italian in Brooklyn.”
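The four steps can be strung together in a toy pipeline. Everything in this sketch is hypothetical: the keyword-based extraction, the similarity table, the venue affinity scores, and the function names stand in for components the post describes only at a high level.

```python
# Step 2 lookup table: query concept -> [(Taste Graph attribute, similarity)].
# Values are invented for illustration.
CONCEPT_MAP = {
    "romantic": [("Date Night", 0.9), ("Candlelit", 0.8)],
}

# Step 3 data: venue -> {attribute: (affinity score, mention count, sample tip)}.
VENUE_GRAPH = {
    "Venue A": {"Candlelit": (0.7, 15, "The candlelit tables are lovely")},
    "Venue B": {"Date Night": (0.4, 6, "Nice for a date")},
    "Venue C": {},
}


def extract_concepts(query):
    """Step 1: naive keyword spotting in place of real concept extraction."""
    return [word for word in query.lower().split() if word in CONCEPT_MAP]


def search(query):
    """Steps 2-4: map concepts to attributes, score venues, justify."""
    attributes = [
        pair for concept in extract_concepts(query) for pair in CONCEPT_MAP[concept]
    ]
    results = []
    for venue, affinities in VENUE_GRAPH.items():
        score, reasons = 0.0, []
        for attr, similarity in attributes:
            if attr in affinities:
                affinity, mentions, tip = affinities[attr]
                score += similarity * affinity
                reasons.append(f'{mentions} users mention "{attr}": "{tip}"')
        if score > 0:
            results.append((venue, round(score, 2), reasons))
    return sorted(results, key=lambda r: r[1], reverse=True)


for venue, score, reasons in search("romantic Italian restaurant in Brooklyn"):
    print(venue, score, reasons)
```

Because every score is built from named attributes and counted mentions, the justification falls out of the same traversal that produces the ranking.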

The Future of Conversational Place Discovery

The Ask API represents our vision for conversational, contextually aware place discovery: a system that understands not just what you ask for, but what you mean. We’re eliminating the cognitive burden of learning system vocabularies, moving toward a future where asking “where should we go?” becomes as natural as asking a knowledgeable local friend.

You can experience this natural language search in action through Superlocal, our consumer app built on the Ask API, where users are already discovering places through conversation rather than filters, or on Swarm, where it brings natural language search to millions of users exploring their cities.

Get Started with the Ask API

The Ask API is currently available to enterprise customers. Visit our Docs site to explore the Ask API documentation and contact our team to request access for your application.