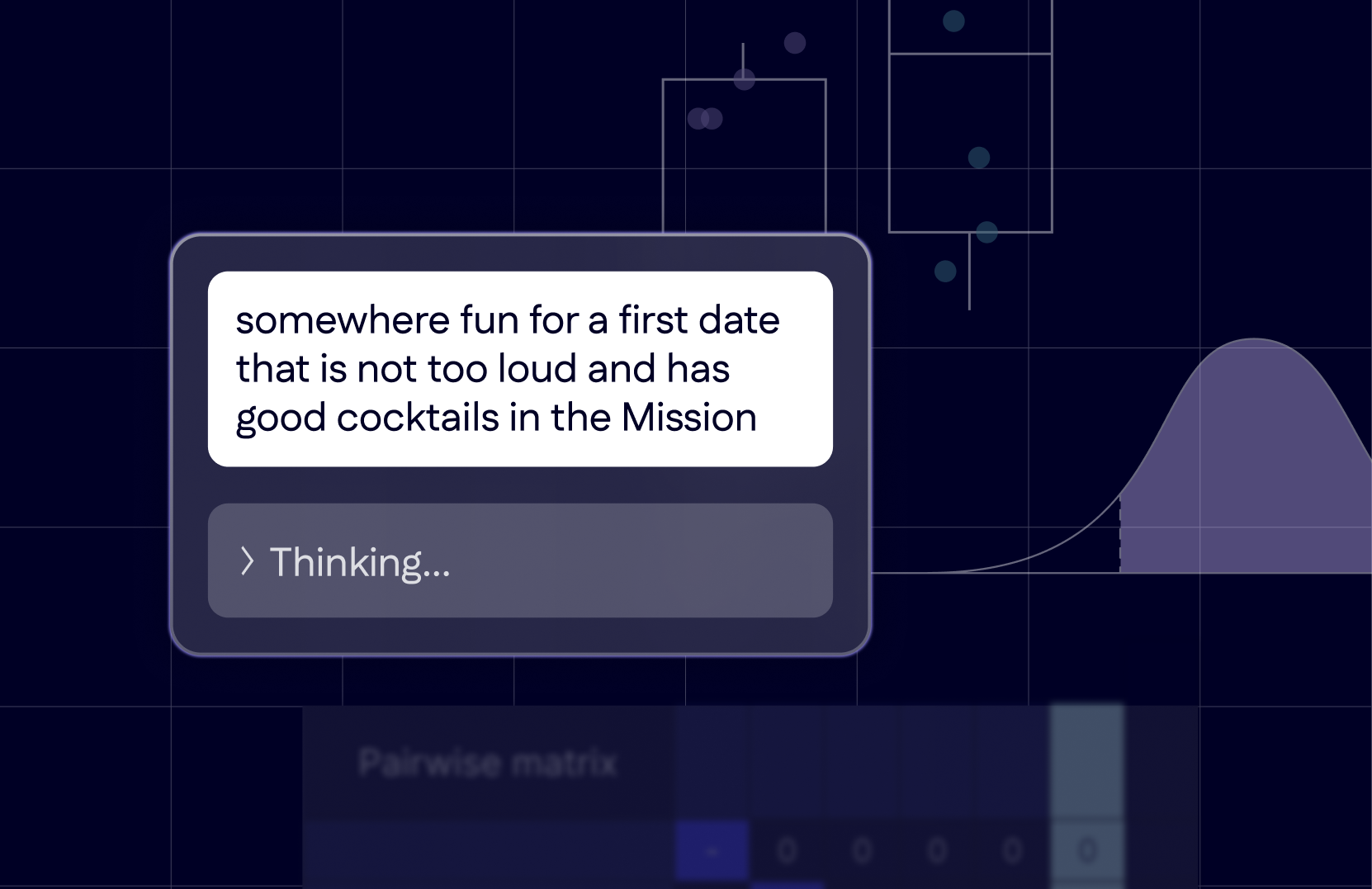

The “old retail playbook” is officially obsolete. As we head into the 2026 back-to-school season, AI-driven shopping habits and shifting economic priorities have fundamentally rewritten the consumer journey. To win this year, retailers must move beyond broad-reach digital tactics and anchor their strategies in the precision of real-world behavior.

Location data remains the most accurate reflection of intent, providing the insights needed to drive incremental outcomes in an increasingly competitive landscape. In this blog, we’ll cover the trends shaping this season, the privacy-forward targeting tactics behind personalized advertising, and why measuring real-world outcomes is critical to defending market share as foot traffic declines across several key retail categories.

Let’s get into the three steps retailers can take today to win the 2026 back-to-school season:

Step #1. Understanding 2026 Consumer Behavior

The traditional back-to-school shopping “sprint” has become a marathon. Driven by persistent inflationary pressure, the rise of AI-assisted shopping, and a structural shift in how shoppers engage with physical stores, today’s consumer is more intentional than ever.

To craft an effective 2026 back-to-school campaign, retailers must look beyond traditional August peaks and align with these three shifts in shopper behavior:

| Shift | What it means | Source |
| --- | --- | --- |
| Earlier Starts and Extended Timelines | Back-to-school shopping in 2026 will begin as early as May and stretch into late September as households spread out purchases to manage budgets and hunt for specific deals. | NRF |
| The “Phygital” Conversion Gap | Shoppers move fluidly between digital, AI, and in-store channels to research and buy. But with 60% of Google searches now ending without a click, the in-store visit becomes the critical, measurable conversion event. | Sensormatic¹, Capital One Shopping² |
| Value-Driven Brand Switching | Brand loyalty in 2026 is weakening as price sensitivity takes priority. While shoppers plan earlier, they are increasingly willing to switch brands if a better deal appears at the point of purchase. | InMar¹, PwC² |

These shifts represent a fundamental change in the “math” of a retail campaign. When research happens behind AI interfaces and loyalty is won or lost at the shelf, traditional broad-reach tactics are no longer sufficient. To win, retailers must first be able to identify high-intent “mission” shoppers in real time and guide them across the finish line.

Step #2. Reaching the Right Audience in a Value-Driven Market

Persistent economic pressures have transformed the back-to-school journey into a highly intentional mission for the modern household, with a majority of consumers willing to trade down to brands that offer better value.

Because these shoppers are driven by specific needs, your strategy must pivot from static profiles to real-world intent. Foursquare’s location suite helps you identify and influence these specific shopping missions as they unfold:

- Identify Intent with Foursquare Audience: Use historical visit data to identify specific shopper archetypes. Are they “Discount Devotees” who prioritize budget-friendly retailers, or “Big Box Loyalists” who prefer the one-stop-shop convenience of a warehouse club? For example, target parents who frequent thrift and resale shops (a rising 2026 trend) with digital ads promoting your brand’s durability and long-term value, locking in their consideration while they are still in the research phase.

- Influence the Choice with Foursquare Proximity: Capture the “Decision Maker” in real time. Use precise geofencing to reach consumers while they are physically in-store or at a competitor’s location. This is the moment they are actively comparing prices. Triggering a “Value-Match” or a “Convenience-Bonus” promotion via mobile ad placements at this exact moment can sway a shopper who is on the fence.

- Convert and Retain via Movement SDK: Turn your mobile app into a conversion engine. By integrating Foursquare’s Movement SDK, you can send hyper-personalized push notifications based on a user’s real-time location context. For example, when a high-value loyalty member enters your store for their back-to-school mission, you can serve a custom in-app offer (“Welcome back! Get $10 off your $50 supply haul today”) directly to their phone, turning a routine trip into a high-conversion experience.

By balancing these three levers, retailers can move beyond broad targeting to align with specific shopping missions, delivering relevant messages that drive real-world results.
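For readers who want to see the mechanics, the proximity and in-app levers above both come down to a geofence check: is a device within a set radius of a store? The Python sketch below is a generic illustration of that idea, not Foursquare’s API or the Movement SDK. The function names, offer labels, and store dictionaries are all hypothetical, and a production system would also handle consent, location accuracy, and dwell time.

```python
import math

EARTH_RADIUS_M = 6_371_000  # mean Earth radius in meters


def haversine_m(lat1, lon1, lat2, lon2):
    """Great-circle distance in meters between two lat/lon points."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi = math.radians(lat2 - lat1)
    dlmb = math.radians(lon2 - lon1)
    a = (math.sin(dphi / 2) ** 2
         + math.cos(phi1) * math.cos(phi2) * math.sin(dlmb / 2) ** 2)
    return 2 * EARTH_RADIUS_M * math.asin(math.sqrt(a))


def inside_geofence(device, fence):
    """True if the (lat, lon) device point falls within the circular fence."""
    distance = haversine_m(device[0], device[1], fence["lat"], fence["lon"])
    return distance <= fence["radius_m"]


def pick_offer(device, own_stores, competitor_stores):
    """Hypothetical rule: welcome offer in our store, value match at a rival."""
    if any(inside_geofence(device, f) for f in own_stores):
        return "loyalty_welcome"  # e.g. the "$10 off $50" in-app offer
    if any(inside_geofence(device, f) for f in competitor_stores):
        return "value_match"      # e.g. a price-match mobile ad
    return None                   # not near any tracked location


# Illustrative coordinates only; these are not real store locations.
own = [{"lat": 40.7500, "lon": -73.9900, "radius_m": 100}]
rivals = [{"lat": 40.7600, "lon": -73.9800, "radius_m": 100}]
offer = pick_offer((40.7501, -73.9901), own, rivals)
```

Checking your own stores before competitor locations is a design choice in this sketch: a shopper already in your aisle gets the retention offer, never the conquesting one.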

Step #3. Measuring Campaign Effectiveness Amidst Foot Traffic Declines

While total back-to-school spending is projected to remain near record highs at approximately $38.8 billion (NRF), the nature of the customer journey is changing. The 2026 journey is no longer a linear path; it’s hyper-fragmented. A single “trip” might start with an AI-powered research session, move to a virtual try-on, convert to a Buy Online, Pick Up In-Store (BOPIS) order, and end with an impulse buy at a physical store.

This fragmentation makes it much harder for retailers to isolate which media or channel actually drove a conversion. That lack of clarity is especially costly as foot traffic declines year over year across several key retail categories. While total spending is strong, shoppers are becoming more efficient, often visiting fewer stores but with significantly higher intent. In an environment with fewer trips to go around, every visit becomes a “must-win” moment.

With digital signals diminishing and fragmentation the new norm, advertisers can no longer afford to fly blind. Foursquare Attribution acts as the connective tissue across this fragmented path-to-purchase. By connecting digital touchpoints to real-world outcomes across all channels, including digital, audio, OOH, CTV, social, and more, retailers can:

- Identify the true driver. Pinpoint exactly which media tactic cut through the noise to not only put a customer in your aisle, but take them all the way through the checkout line.

- Optimize for intent. Real-time campaign results allow for in-flight optimizations, so you can shift budget toward the channels driving the highest incremental “mission-driven” outcomes rather than chasing empty reach.

- Defend your market share. When overall foot traffic is declining, concentrate your campaign on the pockets where it is still driving visits. This allows you to defend your market share, even as total category volume shrinks.
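To make “incremental” concrete, here is a simplified Python sketch of the arithmetic behind a lift comparison: the visit rate of people exposed to a channel against the visit rate of a comparable unexposed control group. This is a textbook-style illustration, not Foursquare Attribution’s methodology. The `ChannelResult` type, the function names, and the sample figures are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class ChannelResult:
    """Hypothetical per-channel counts from an exposed/control comparison."""
    channel: str
    exposed_devices: int
    exposed_visits: int
    control_devices: int
    control_visits: int


def visit_rate(visits, devices):
    """Share of devices that made a store visit."""
    if devices <= 0:
        raise ValueError("devices must be positive")
    return visits / devices


def lift(result):
    """Relative lift of exposed over control; None if control rate is zero."""
    exposed = visit_rate(result.exposed_visits, result.exposed_devices)
    control = visit_rate(result.control_visits, result.control_devices)
    if control == 0:
        return None
    return (exposed - control) / control


def incremental_visits(result):
    """Visits beyond what the control rate predicts for the exposed group."""
    exposed = visit_rate(result.exposed_visits, result.exposed_devices)
    control = visit_rate(result.control_visits, result.control_devices)
    return (exposed - control) * result.exposed_devices


def rank_by_incremental_visits(results):
    """Order channels from most to fewest incremental visits."""
    return sorted(results, key=incremental_visits, reverse=True)


# Made-up numbers for illustration only.
ctv = ChannelResult("ctv", 10_000, 500, 10_000, 400)
social = ChannelResult("social", 20_000, 820, 20_000, 800)
ranking = [r.channel for r in rank_by_incremental_visits([ctv, social])]
```

In this made-up example, social produces more total visits (820 versus 500), but CTV produces more visits that would not have happened anyway. That gap is the difference between chasing reach and optimizing for incremental outcomes.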

Take Action Today

As the 2026 back-to-school season accelerates, the winners will be those who optimize for real-world outcomes. In a year defined by fragmented customer journeys and more selective, intentional shoppers, success depends on moving beyond “clicks” to influence and measure the final purchase mission.

Schedule a meeting today to explore how Foursquare’s location suite can help you navigate 2026’s unique retail challenges and turn declining foot traffic into increased market share.